
Advances in artificial intelligence are motivating commercial and manufacturing companies to integrate new technologies into their operations to gain a competitive advantage. This is generating strong demand not only for high-performance hardware but also for IT services. Many service providers in Europe already rent out servers for machine learning and inference workloads.

Artificial intelligence workloads involve large volumes of data and demand high computing power. Only expensive server hardware can meet these requirements, so cost optimization at the project launch stage matters even for large businesses.

How do you select the right AI server to rent? What hardware and technologies do manufacturers offer for working with neural networks? Can a service provider help save resources and optimize costs? You can find answers to these and other questions in this article.

Launching a project to work with artificial intelligence is a complex and multifaceted task. It is important not only to select the right hardware and service, but also to plan the budget competently. Specialists of our portal can help you with this. Select a convenient time and sign up for a free consultation.


Virtual or dedicated GPU server?

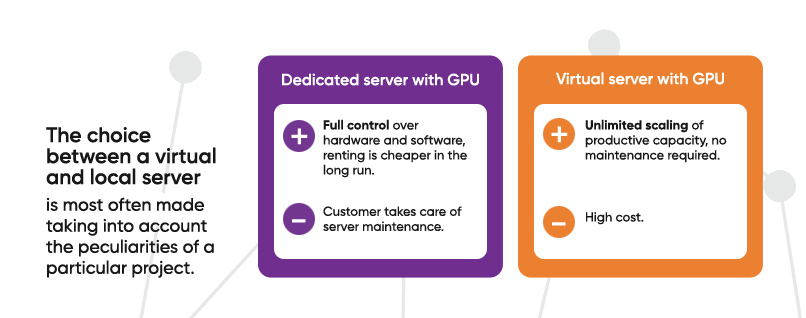

Service providers rent out both virtual and dedicated servers for machine learning and inference tasks. The choice between a virtual and a dedicated server usually depends on the specifics of the project. Both options have advantages and disadvantages.

Renting a dedicated GPU server gives you full control over the hardware and software, which is a clear advantage and can be an important requirement for a business project. In addition, over the long term, renting a dedicated server with high-performance hardware is cheaper than using a virtual machine with a similar configuration.

This option also has its disadvantages. First, the client has to take care of server maintenance: repairs, software updates, licensing and so on. Second, it is much more difficult to scale computing power on physical hardware.

Renting a virtual GPU server allows you to focus on business tasks without being distracted by maintenance and software availability issues. In addition, with modern network technologies, the capacity of a virtual server can be scaled almost without limit.

The main disadvantage of renting a virtual server is its high cost. In practice, cost is often the deciding factor that leads clients to choose dedicated hardware.

In the vast majority of cases, commercial companies rent a dedicated physical GPU server for AI work and place it in a data center. With this approach, room for future increases in computing power can be planned at the hardware design stage, and maintenance can be entrusted to the service provider's technical staff. This allows significant savings when organizing a project around a dedicated AI server.
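The long-term cost argument above can be checked with simple arithmetic. The following Python sketch is only an illustration: the one-time setup fee and all prices are hypothetical placeholders rather than provider quotes, and the function name `break_even_month` is invented for this example.

```python
import math
from typing import Optional


def break_even_month(dedicated_setup: float,
                     dedicated_monthly: float,
                     virtual_monthly: float) -> Optional[int]:
    """Return the first month in which the cumulative cost of a dedicated
    server is no higher than that of a virtual server, or None if the
    dedicated option never catches up."""
    if virtual_monthly <= dedicated_monthly:
        return None  # no monthly saving, so the setup fee is never recovered
    saving_per_month = virtual_monthly - dedicated_monthly
    return max(1, math.ceil(dedicated_setup / saving_per_month))


# Hypothetical prices in EUR, for illustration only.
month = break_even_month(dedicated_setup=6000,
                         dedicated_monthly=2500,
                         virtual_monthly=3500)
print(f"Dedicated server pays off from month {month}")
```

With real quotes from a provider in place of the placeholders, the same calculation shows how long a project must run before the dedicated option becomes the cheaper one.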

Selecting components for an AI server

Machine learning (ML) and deep learning (DL) involve running complex models on large amounts of data. To handle this, servers are equipped with powerful GPUs capable of massively parallel computing.

As demand for AI hardware grows, the world's leading manufacturers are developing new products for these workloads. In particular, there are new GPUs optimized for training neural networks and server models whose architecture is designed around these tasks.

This simplifies assembling a server for a specific project, but resources still need to be optimized. Even at the design stage, it is possible to identify the most suitable components while taking the manufacturers' recommendations into account.

Processor

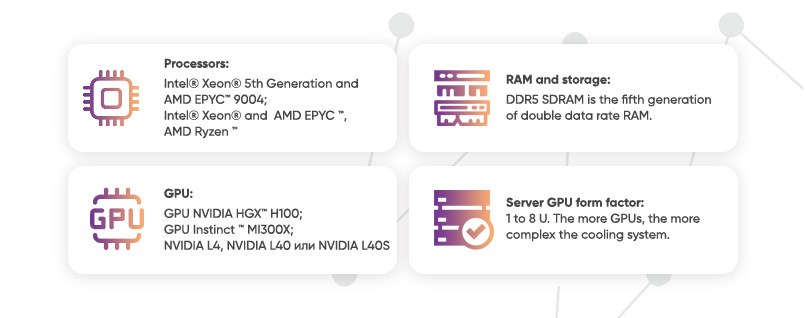

As of the first quarter of 2024, 5th generation Intel® Xeon® processors and AMD EPYC™ 9004 processors lead the field in server architectures for neural network training and are the strongest options among x86 (CISC) processors. They're a good fit when you need high performance combined with a robust, proven ecosystem.

If the budget is limited, you can consider earlier generations of Intel® Xeon® and AMD EPYC™ processors. The AMD Ryzen™ series may also be a good choice for entry-level systems.

Modern servers may have one or two processor sockets. A dual-processor configuration offers higher performance and availability, but it consumes more power and requires more sophisticated thermal management, which increases costs.

GPU

Some modern GPUs are specifically optimized by manufacturers for machine learning applications.

If you need to maximize processing power and cost is not a constraint, the NVIDIA H100 GPU on the NVIDIA HGX™ platform is the best option for now. High-density HGX modules with four or eight GPUs are often integrated into liquid-cooled enclosures to get the most out of these chips. An eight-GPU NVIDIA HGX™ H100 system can deliver up to 32 petaFLOPS of FP8 deep learning performance.

A powerful alternative to the H100 is the AMD Instinct™ MI300X GPU. This chip stands out for its very large memory capacity and very high memory bandwidth, both of which are important for large language models (LLMs). A well-known LLM such as Falcon-40B, with forty billion parameters, can run on a single MI300X accelerator.
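A rough estimate shows why memory capacity decides whether a model fits on one accelerator. The sketch below assumes 16-bit weights (2 bytes per parameter) and a 20% headroom for runtime buffers; both are assumptions for illustration, and the function names are invented here. The capacity figures (192 GB for the MI300X, 80 GB for the H100) are taken from public spec sheets and should be checked against current datasheets. The estimate covers inference weights only; training needs considerably more memory.

```python
def weights_memory_gb(params_billion: float, bytes_per_param: int = 2) -> float:
    """Approximate memory for model weights alone, in GB (10^9 bytes).
    One billion parameters at N bytes each take N GB."""
    return params_billion * bytes_per_param


def fits_on_one_gpu(params_billion: float, gpu_memory_gb: float,
                    bytes_per_param: int = 2, headroom: float = 1.2) -> bool:
    """Check whether the weights plus an assumed headroom fit in GPU memory."""
    required_gb = weights_memory_gb(params_billion, bytes_per_param) * headroom
    return required_gb <= gpu_memory_gb


# Memory capacities in GB; verify against the vendors' datasheets.
GPU_MEMORY_GB = {
    "AMD Instinct MI300X": 192,
    "NVIDIA H100 (80 GB)": 80,
}

for name, capacity_gb in GPU_MEMORY_GB.items():
    verdict = "fits" if fits_on_one_gpu(40, capacity_gb) else "does not fit"
    print(f"Falcon-40B in 16-bit precision {verdict} on one {name}")
```

Under these assumptions a forty-billion-parameter model needs about 80 GB for weights alone, which is why it fits comfortably on one MI300X but would have to be quantized or split across several GPUs with 80 GB each.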

If the amount of data used to train the AI is not too large, there is no point in chasing maximum performance. In this case, you can save money and build a server around NVIDIA L4, NVIDIA L40 or NVIDIA L40S GPUs. The manufacturer also recommends these chips for working with neural networks.

All of the GPUs listed above are well suited to both machine learning and inference tasks. The choice of GPU should always be based on the project's technical requirements and budget.

RAM and storage

The most advanced type of RAM is still DDR5 SDRAM, the fifth generation of double data rate (DDR) memory. Compared to previous generations, DDR5 offers higher module capacity and higher bandwidth. Whatever kind of AI server you have, a single DIMM module will never be enough. Some servers have up to 48 DIMM slots. The total amount of RAM is selected based on the project requirements, but industrial solutions most often need 512 to 1024 GB.
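The relationship between a RAM target and the available DIMM slots can be sketched in a few lines of Python. The list of module sizes is an assumption (commonly sold capacities; actual availability depends on the platform), and `plan_dimms` is a hypothetical helper. The sketch also ignores the usual advice to populate memory channels evenly.

```python
import math
from typing import Optional, Tuple

# Assumed module capacities in GB; check what the chosen platform supports.
MODULE_SIZES_GB = (16, 32, 64, 128)


def plan_dimms(target_gb: int, slots: int) -> Optional[Tuple[int, int]]:
    """Return (module_size_gb, module_count) using the smallest module size
    that reaches the target within the available slots, or None if even
    the largest modules are not enough."""
    for size_gb in MODULE_SIZES_GB:
        count = math.ceil(target_gb / size_gb)
        if count <= slots:
            return size_gb, count
    return None


print(plan_dimms(1024, 48))  # a 48-slot server
print(plan_dimms(512, 8))    # a small 8-slot server
```

Choosing the smallest module size that fits keeps the sketch simple; larger modules leave more slots free for a later upgrade, so the real choice is a trade-off between current cost and expandability.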

While RAM holds data for immediate use, storage keeps information until the user deletes it. AI servers are built with NVMe SSDs that use the latest PCIe Gen5 interface. They offer the fastest transfer speeds and the lowest latency. The total storage capacity also depends directly on the technical requirements of the project.

GPU Server Form Factor

The form factor defines how much space in a server rack the hardware requires. Server height is measured in rack units, abbreviated U (1U is 1.75 inches, or 44.45 mm). Since servers in a rack are stacked on top of each other, the width of the server is always standard (unless it is a tower form factor), while the height depends directly on the number of U.

For AI work, servers are most often designed with heights between 1U and 8U. As a rule, the more GPUs the architecture includes, the more complex the cooling system is and the more space the server takes up.

In most cases, a 1U server can accommodate up to 4 GPUs, and a 4U server up to 10 GPUs. These figures are approximate, since much depends on the type of GPU used. There are 2U servers that support up to 16 single-slot GPUs.

When selecting a form factor, note that the cost of renting rack space in a data center depends directly on the height of the selected server (the number of U in the specifications). The more U the server occupies, the more the rack space costs to rent.
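The effect of server height on rental cost can be illustrated with a short calculation. This sketch assumes that rack space is billed linearly per U, which is a simplification, and the price per U is a hypothetical placeholder. The GPU counts reuse the approximate figures mentioned above.

```python
def monthly_rack_cost(height_u: int, price_per_u: float) -> float:
    """Monthly rack rental, assuming a flat price per rack unit."""
    return height_u * price_per_u


def rack_cost_per_gpu(height_u: int, gpu_count: int, price_per_u: float) -> float:
    """Share of the monthly rack rental attributable to each GPU."""
    return monthly_rack_cost(height_u, price_per_u) / gpu_count


PRICE_PER_U_EUR = 25.0  # hypothetical price, for illustration only

for label, height_u, gpus in [("1U, 4 GPUs", 1, 4), ("4U, 10 GPUs", 4, 10)]:
    total = monthly_rack_cost(height_u, PRICE_PER_U_EUR)
    per_gpu = rack_cost_per_gpu(height_u, gpus, PRICE_PER_U_EUR)
    print(f"{label}: {total:.2f} EUR per month, {per_gpu:.2f} EUR per GPU")
```

Comparing the cost per GPU rather than the cost per server makes it easier to weigh a dense, compact chassis against a taller one with simpler cooling.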

Technical and service maintenance of GPU servers for AI

It is important to understand that building a server does not guarantee a successful project launch. Rented hardware requires maintenance and service. Hosting companies often offer services that help optimize resources and significantly reduce rental and maintenance costs.

- At its best, a GPU server rental service includes a full range of services from the provider: free development of the server architecture, hardware purchase, connection and configuration.

- Some service providers also offer free server migration or relocation to a new data center if the client is ready to sign a lease agreement or use a colocation service.

- Many hosting companies are ready to sign a lease agreement that gives the client the right to buy the hardware if necessary. Such a lease agreement is concluded for two years or more.

- An additional maintenance contract for the dedicated server relieves the business of having to handle repairs, licensing, and the correct operation of hardware and software on its own. All of these responsibilities pass entirely to the provider. The consequences of possible incidents are minimized, and the client receives regular reports on server and software operation.

- Subscription-based post-warranty service is another option that may be in demand when renting a GPU server. The hosting provider will replace any components, including the most expensive ones, free of charge as long as the client pays a monthly subscription fee. As a rule, this service costs from €30 per month.

When signing a contract to rent a GPU server for AI work, it is important to pay attention to security and to the quality of technical support.

Placing the hardware in a Tier 3 data center is the best choice in terms of security. Tier 3 data centers use advanced ventilation and fire suppression systems, conduct regular security checks, and grant access to server rooms only through biometric authentication. The server itself is housed in a locked cabinet with an individual power meter.

The quality of the service provider's support team is also of utmost importance. If the hosting provider accepts service requests only by e-mail and takes many hours or even days to process them, that is a serious reason to look elsewhere. All GPU server maintenance issues must be resolved promptly, because this directly affects the performance of the server and the success of the project as a whole. The ability to contact technical support through messaging apps is a welcome advantage if the provider offers this level of service.

Renting GPU server for machine learning. Selecting components and service


Designing an AI server is a complex engineering task that can challenge even experienced specialists. Technologies change very quickly, and individual components sometimes become obsolete in 6-7 months. If you want to understand which configuration will be optimal for your business project and what benefits you can get from the service provider, leave a request for a free online consultation.

Article author Olga Boujanova Consultant on server hardware, network and cloud technologies